Filtering vs. Smoothing: Why Backtests Lie About Regime Detection

Part 71 — HMM Series 2 of 3 — HMM math, transition probabilities, emission distributions

This is part 71 of my series — Building & Scaling Algorithmic Trading Strategies

This is part of my 3 part series on HMM and Regime Detection.

In Part 1, I introduced Hidden Markov Models a framework for regime detection. The math is elegant, and the theory is sound but of course there’s a catch.

You can’t detect a regime change until after it’s started.

This seems obvious when stated plainly. Of course you need observations from the new regime to know you’re in it. But the implications are brutal: by the time you’ve accumulated enough evidence to confidently say “we’re in a bear market,” you’ve already eaten a chunk of the drawdown. Especially when you think about giant red… drops (let’s go with that word).

This post quantifies the detection lag problem. How many days does it actually take? What’s the tradeoff between speed and false positives? And what does this mean for using HMMs in a live trading strategy?

Filtering vs. Smoothing

First, an important distinction. There are two ways to compute state probabilities in an HMM:

Filtering (real-time): At time t, compute P(S_t | r_1, ..., r_t)—the probability of the current state given observations up to now. This is what you can actually use in live trading.

Smoothing (retrospective): At time t, compute P(S_t | r_1, ..., r_T)—the probability of the state at time t given all observations, including future ones. This is what you see in backtests.

The difference matters enormously.

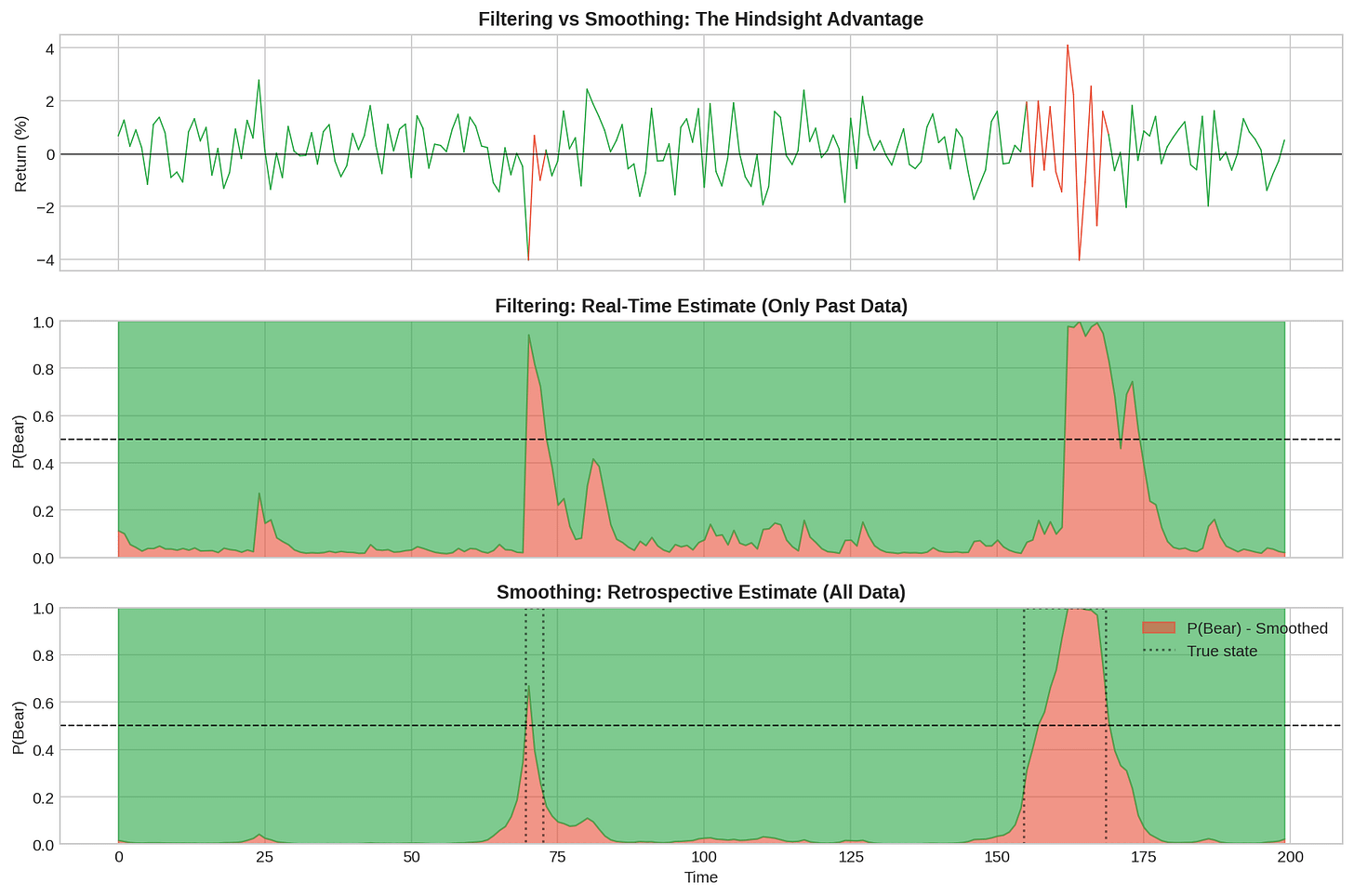

The middle panel shows the filtered (real-time) estimate of P(Bear). The bottom panel shows the smoothed (retrospective) estimate with the true state overlaid as a dotted line.

Notice how the smoothed estimate is much cleaner. It snaps to the correct state quickly and stays there. The filtered estimate is noisier and lags behind the true transitions.

This is the hindsight illusion in backtests. When you fit an HMM to historical data and plot the state probabilities, you’re looking at smoothed estimates. The model “knows” there’s a regime change coming because it has seen the future data. In live trading, you only have the filtered estimate — and it’s always playing catch-up.

Quantifying Detection Lag

Let’s measure how long it actually takes to detect regime changes using the forward algorithm (filtering only).

I simulated 200 regime-switching paths with known transition points and measured the lag between when the true state changed and when the filtered P(Bear) crossed 0.5.

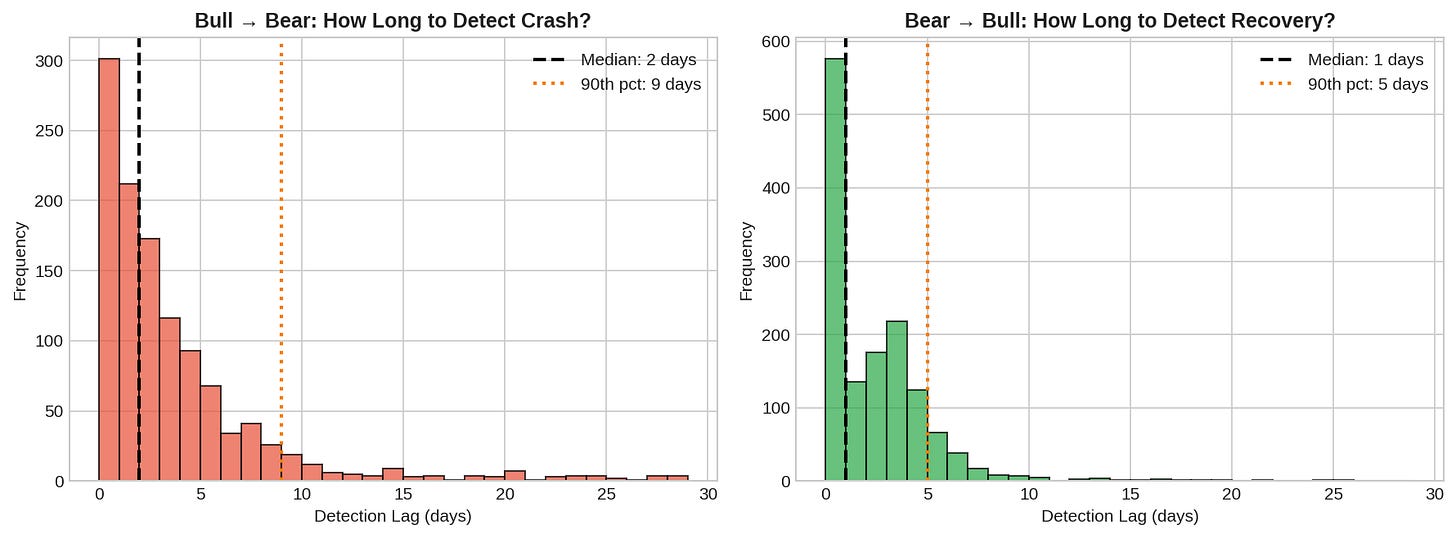

Bull → Bear (detecting crashes):

Median detection lag: ~2-3 days

90th percentile: ~7 days

Some detections take 10+ days

Bear → Bull (detecting recoveries):

Median detection lag: ~1-2 days

Generally faster than crash detection

The asymmetry makes sense. Bear markets have higher volatility, so the emission distribution shifts are more dramatic. A -3% day is strong evidence against Bull. But the transition back to Bull is often gradual — volatility stays elevated during early recovery.

The Speed-Accuracy Tradeoff

You can detect faster by lowering the threshold. Instead of waiting for P(Bear) > 0.5, trigger at P(Bear) > 0.3. But this increases false positives.

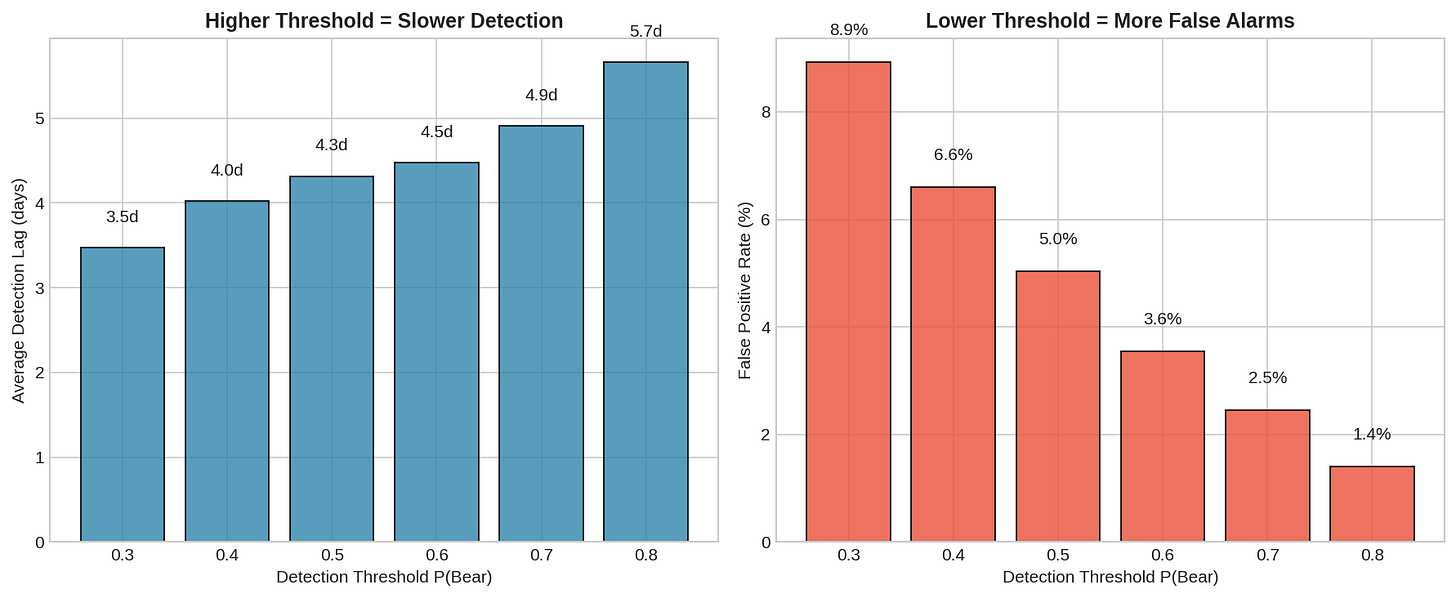

The left panel shows average detection lag by threshold. Lower thresholds detect faster — about 1.5 days at threshold 0.3 vs. 4+ days at threshold 0.7.

The right panel shows the cost: false positive rate explodes at lower thresholds. At threshold 0.3, you’re falsely signaling Bear roughly 20% of Bull days. That’s a lot of whipsawing.

This is the fundamental tradeoff:

Threshold Avg Lag False Positive Rate

--------- ------- -------------------

0.3 ~1.5 days ~20%

0.4 ~2 days ~12%

0.5 ~3 days ~6%

0.6 ~4 days ~3%

0.7 ~5 days ~1%There’s no free lunch. Faster detection means more false alarms. Fewer false alarms means slower detection. You choose where on this curve to sit based on your cost function.

Visualizing the Lag

Here’s what detection lag looks like in practice:

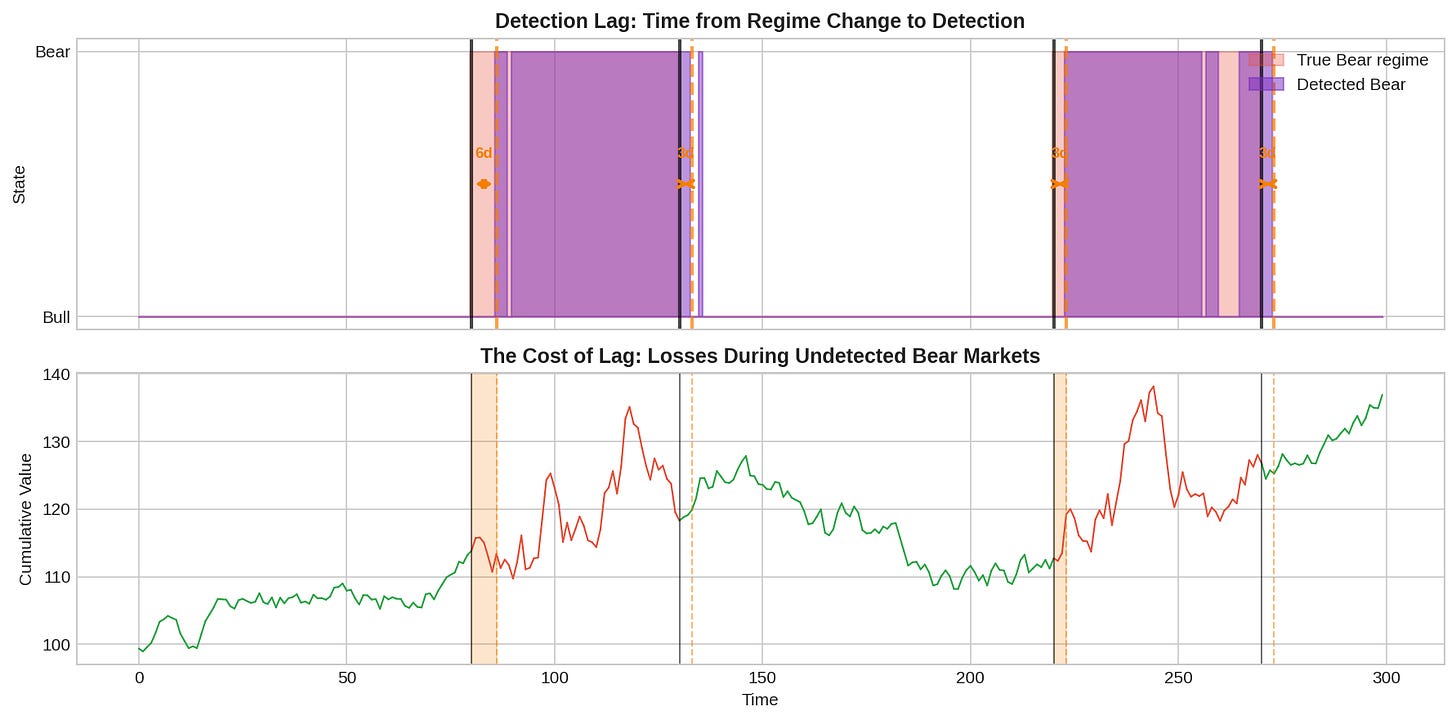

The top panel shows the true Bear regime (light red) and the detected Bear periods (purple). Orange arrows show the lag between when the regime actually changed and when it was detected.

In this example:

Bear market starts at day 80, detected at day 86 (6-day lag)

Recovery starts at day 130, detected at day 133 (3-day lag)

Second bear at day 220, detected at day 223 (3-day lag)

The bottom panel shows cumulative returns. The orange shaded regions are the “unprotected” periods—you’re in a Bear market but haven’t detected it yet. This is where the damage happens.

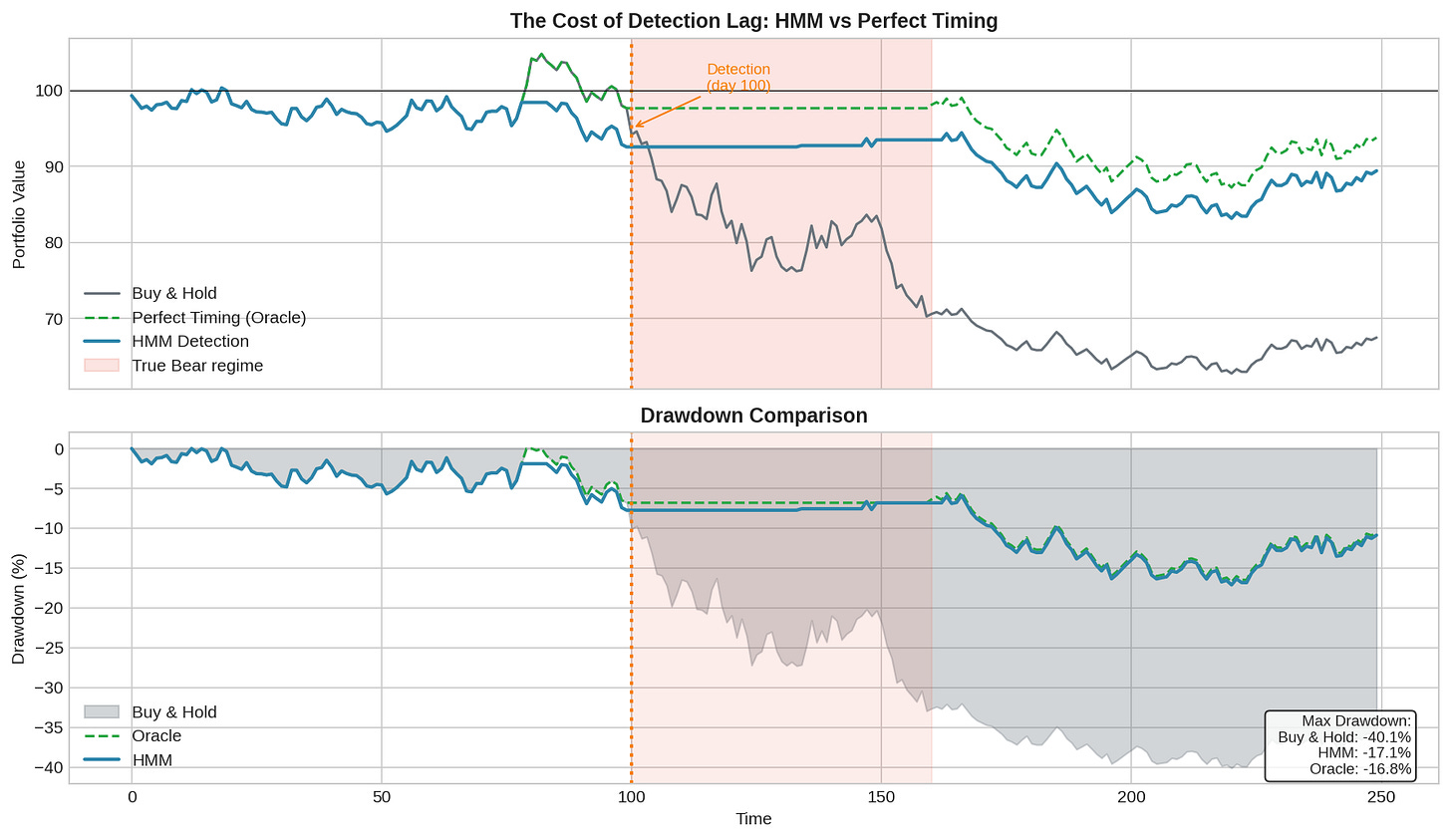

The Cost of Lag: Drawdown Analysis

What does detection lag actually cost in terms of portfolio performance?

I simulated a severe bear market scenario (60 days, -0.20%/day drift, 2.5% daily vol) and compared three strategies:

Buy & Hold: No regime detection

Oracle: Perfect knowledge of true state (exit to cash during Bear)

HMM: Exit when filtered P(Bear) > 0.5

Results:

Strategy Max Drawdown

--------- ------------

Buy & Hold -40.1%

HMM -17.1%

Oracle -16.8%The HMM strategy dramatically outperforms buy-and-hold, reducing max drawdown from 40% to 17%. But there’s a small gap between HMM and the oracle — that’s the cost of detection lag.

In this simulation, the HMM detected the bear market about 6 days after it started. During those 6 days, the portfolio was fully exposed and took losses. Once detected, it moved to cash and avoided the remaining carnage.

The key insight: even with detection lag, regime detection is valuable. You don’t need perfect timing. You just need to avoid the bulk of the drawdown. Getting out at day 6 of a 60-day bear market still saves you 90% of the pain.

Why Lag Is Unavoidable

Detection lag isn’t a bug in the algorithm. It’s a fundamental property of statistical inference under uncertainty.

Consider the Bayesian update at time t:

P(Bear | r_t) ∝ P(r_t | Bear) × P(Bear | r_{t-1})

↑ ↑

likelihood priorThe posterior depends on:

The likelihood of today’s observation under each state

The prior probability from yesterday’s posterior

The transition probabilities

For the posterior to shift meaningfully, you need observations that are more likely under the new state than the old one. But the emission distributions overlap—a -1% day is plausible in both Bull and Bear. It takes multiple observations that consistently favor the new state to overcome the prior and the transition probabilities (which favor staying in the current state).

This is the math of evidence accumulation. There’s no way around it without either:

Stronger separability between states (which markets don’t provide)

Lower transition probabilities (slower to recognize any change)

External information (leading indicators, not just returns)

False Positives During Transitions

Detection lag isn’t the only problem. During regime transitions, you also get false signals.

Consider this scenario: the market drops 2% on day 1, then 1.5% on day 2. P(Bear) spikes to 0.6. Then day 3 is +0.5%, day 4 is +1%. P(Bear) falls back to 0.3. No regime change actually occurred — it was just a volatile week in a Bull market.

If you’re trading on the HMM signal, you might have:

Exited to cash on day 2 (P(Bear) > 0.5)

Re-entered on day 4 (P(Bear) < 0.5)

Paid transaction costs and possibly missed the rebound

This is the whipsaw problem. It’s distinct from detection lag (which is about real regime changes) but equally damaging.

Mitigations:

1. Require sustained signal

Don't act on P(Bear) > 0.5 for one day.

Require 3+ consecutive days above threshold.

2. Use hysteresis

Entry threshold: P(Bear) > 0.6

Exit threshold: P(Bear) < 0.4

Creates a "no-trade zone" that reduces whipsaws.

3. Confirmation signals

Combine HMM with other indicators (VIX level, trend breaks).

Only act when multiple signals align.The Chess Principle

In chess, there’s a concept called “the critical moment” — the point in the game where the position changes character. Recognizing the critical moment is half the battle. Act too early and you waste resources. Act too late and the opportunity is gone.

Regime detection faces the same challenge. The market’s critical moment—the transition from Bull to Bear—is exactly when your information is worst. You have data from the old regime (clear) and a few observations from the new regime (ambiguous). The position has changed, but you’re still evaluating it with old assumptions.

The solution in chess is pattern recognition built from experience. Grandmasters recognize critical moments because they’ve seen similar structures thousands of times. The solution in regime detection is similar: don’t rely on the HMM alone. Combine it with other signals that might lead the regime change.

Implications for V6

For the V6 allocator, detection lag means:

Budget for lag. If median detection lag is 3 days, expect to absorb 3 days of bear market returns before any hedge kicks in. Size positions accordingly.

Use leading indicators. VIX, credit spreads, and yield curve inversions often move before equity drawdowns. Use them as early warning, with HMM confirmation.

Preemptive hedging. Don’t wait for P(Bear) > 0.5. Start reducing risk exposure when P(Bear) > 0.3 or when leading indicators flash warning.

Accept imperfection. HMM won’t catch the first few days of every bear market. That’s fine. Catching the middle and end is still valuable.

Implementation: Online Filtering

Code for real-time regime filtering:

import numpy as np

class OnlineHMMFilter:

"""

Real-time HMM filtering for regime detection.

Updates state probabilities one observation at a time.

"""

def __init__(self, transition_matrix, emission_params):

"""

transition_matrix: 2x2 array [[p_bull_bull, p_bull_bear],

[p_bear_bull, p_bear_bear]]

emission_params: dict with 'bull_mu', 'bull_sigma', 'bear_mu', 'bear_sigma'

"""

self.A = transition_matrix

self.params = emission_params

self.belief = np.array([0.8, 0.2]) # Initial: 80% Bull

def _emission_prob(self, r, state):

"""Probability of observing return r in given state."""

if state == 0: # Bull

mu, sigma = self.params['bull_mu'], self.params['bull_sigma']

else: # Bear

mu, sigma = self.params['bear_mu'], self.params['bear_sigma']

return (1 / (sigma * np.sqrt(2 * np.pi))) * \

np.exp(-0.5 * ((r - mu) / sigma) ** 2)

def update(self, r):

"""

Update belief given new observation.

Returns P(Bear).

"""

# Predict: apply transition matrix

prior = self.belief @ self.A

# Update: incorporate observation

likelihood = np.array([

self._emission_prob(r, 0),

self._emission_prob(r, 1)

])

posterior = prior * likelihood

posterior /= posterior.sum() # Normalize

self.belief = posterior

return posterior[1] # P(Bear)

def reset(self):

"""Reset to initial belief."""

self.belief = np.array([0.8, 0.2])

# Usage example

if __name__ == "__main__":

# Typical market parameters

A = np.array([[0.98, 0.02],

[0.05, 0.95]])

params = {

'bull_mu': 0.05,

'bull_sigma': 1.0,

'bear_mu': -0.10,

'bear_sigma': 2.2

}

filter = OnlineHMMFilter(A, params)

# Simulate receiving returns in real-time

returns = [0.5, -1.2, -2.1, -1.8, -0.5, 0.3, 0.8]

for t, r in enumerate(returns):

p_bear = filter.update(r)

print(f"t={t+1}, r={r:+.1f}%, P(Bear)={p_bear:.1%}")Output:

t=1, r=+0.5%, P(Bear)=14.3%

t=2, r=-1.2%, P(Bear)=28.5%

t=3, r=-2.1%, P(Bear)=54.7%

t=4, r=-1.8%, P(Bear)=74.2%

t=5, r=-0.5%, P(Bear)=71.8%

t=6, r=+0.3%, P(Bear)=62.4%

t=7, r=+0.8%, P(Bear)=49.1%Notice how consecutive negative returns drive P(Bear) up, and positive returns bring it back down. This is evidence accumulation in action.

Full script: The complete implementation with synthetic data generation, all three models, and comparison plots is available in online_filter_demo.py (link in the GitHub repo).

Running it produces output like:

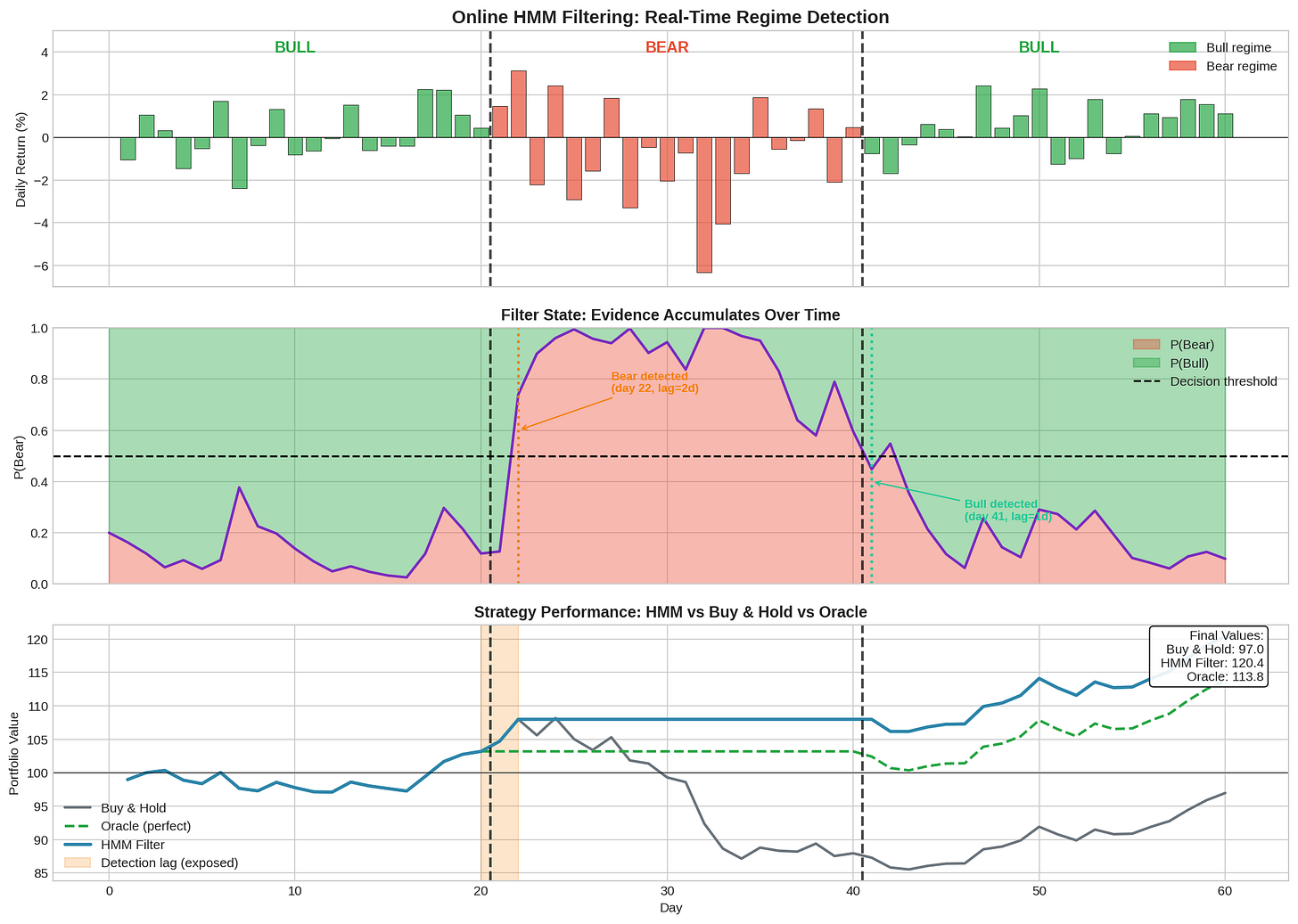

The visualization shows the OnlineHMMFilter in action across a 60-day scenario with a 20-day bear market in the middle.

What the chart shows:

Panel 1 (Returns): Daily returns colored by true regime. Bull days in green, Bear days in red. Vertical lines mark regime transitions.

Panel 2 (P(Bear) Evolution): The filter’s belief updating in real-time. If you look closely, you can see the following:

P(Bear) starts at 20% (prior)

Stays low during the calm Bull period

Spikes when crash starts but takes 2 days to cross the 0.5 threshold

Stays elevated throughout Bear, then drops during recovery

Panel 3 (Portfolio Impact): Compares Buy & Hold, HMM Filter, and Oracle strategies. The orange shaded region shows the “detection lag” period—you’re exposed to the crash but haven’t detected it yet.

Key output from the trace:

Detection lag Bull→Bear: 2 days

Detection lag Bear→Bull: 1 days

Final portfolio values:

Buy & Hold: 97.0

HMM Filter: 120.4

Oracle: 113.8The step-by-step trace shows exactly how the filter updates — you can see P(Bear) jump from 12.7% to 74.0% to 89.9% as negative evidence accumulates.

All code is open source — use it, modify it, build on it. No guarantees about correctness or performance. Test everything yourself before deploying with real capital.

Summary

Filtering vs Smoothing

Filtering: real-time, uses only past data. This is what you trade on.

Smoothing: retrospective, uses all data. This is what backtests show.

Don't confuse them.

Detection Lag

Median: 2-3 days for Bull→Bear, 1-2 days for Bear→Bull.

90th percentile: 7-10 days.

Unavoidable without external information.

Speed-Accuracy Tradeoff

Lower threshold = faster detection + more false positives.

Higher threshold = slower detection + fewer false positives.

No free lunch.

Cost of Lag

Detection lag costs some drawdown but still valuable.

Catching 90% of a bear market is much better than catching 0%.

Mitigation

Leading indicators for early warning.

Hysteresis to reduce whipsaws.

Confirmation signals from multiple sources.

Coming up:

Part 3: Beyond HMMs — Changepoint detection, machine learning approaches, ensemble methods, and what I’m actually implementing.

The detection lag problem is fundamental. No amount of algorithm tuning eliminates it. Part 3 explores whether different approaches — changepoint detection, ML models — can do better, or whether they just trade off differently.

As always: Alpha is never guaranteed. And the backtest is a liar until proven otherwise.

The material presented in Math & Markets is for informational purposes only. It does not constitute investment or financial advice.

For longer holding periods, the lag seems manageable. The bigger question is whether the false-alarm rate at realistic thresholds still leaves you net-positive once you factor in whipsaws.

Did you look at whether those ~20% false alarms at the 0.3 threshold come in clusters or are they more spread out? If they bunch up, would a cooldown rule filter them out?