Environment, Modules, and Data Flow (no code, just the blueprint)

Part 3 below focuses on the environment, modules, data flow, and setup for my project

This is part 3 of my series — Building & Scaling Algorithmic Trading Strategies

Here I talk about the practical setup behind my strategies: Python and C++ environment, project layout, modules, and how data moves (in and out of CSVs). No code, just enough detail for reproducibility and clean iteration.

It’s been a couple of years since I touched code, so a lot of this was new to me. In a previous era I’d have used a lot of StackExchange, but thankfully we have Codex and Claude now. Please feel free to ignore this if you know this already.

Python environment (Mac M2)

Version: Python 3.12

Isolation:

venv (simple, reliable). If you prefer, Poetry/uv are fine; the point is locked deps and repeatable installs.

Core libs: pandas, numpy, scipy, statsmodels (for quick stats), matplotlib (for local plots), pydantic (config validation), requests/httpx (API), tqdm (progress), loguru (logging).

Reproducibility:

requirements.txt + lock file (or Poetry lock).

A single command to bootstrap on a fresh machine.

Pin versions for anything that touches data or math.

Notes for Apple Silicon: keep everything native; avoid mixing conda/pyenv unless you have a reason. The simpler the env, the fewer surprises. Trust me, I learned this the hard way.

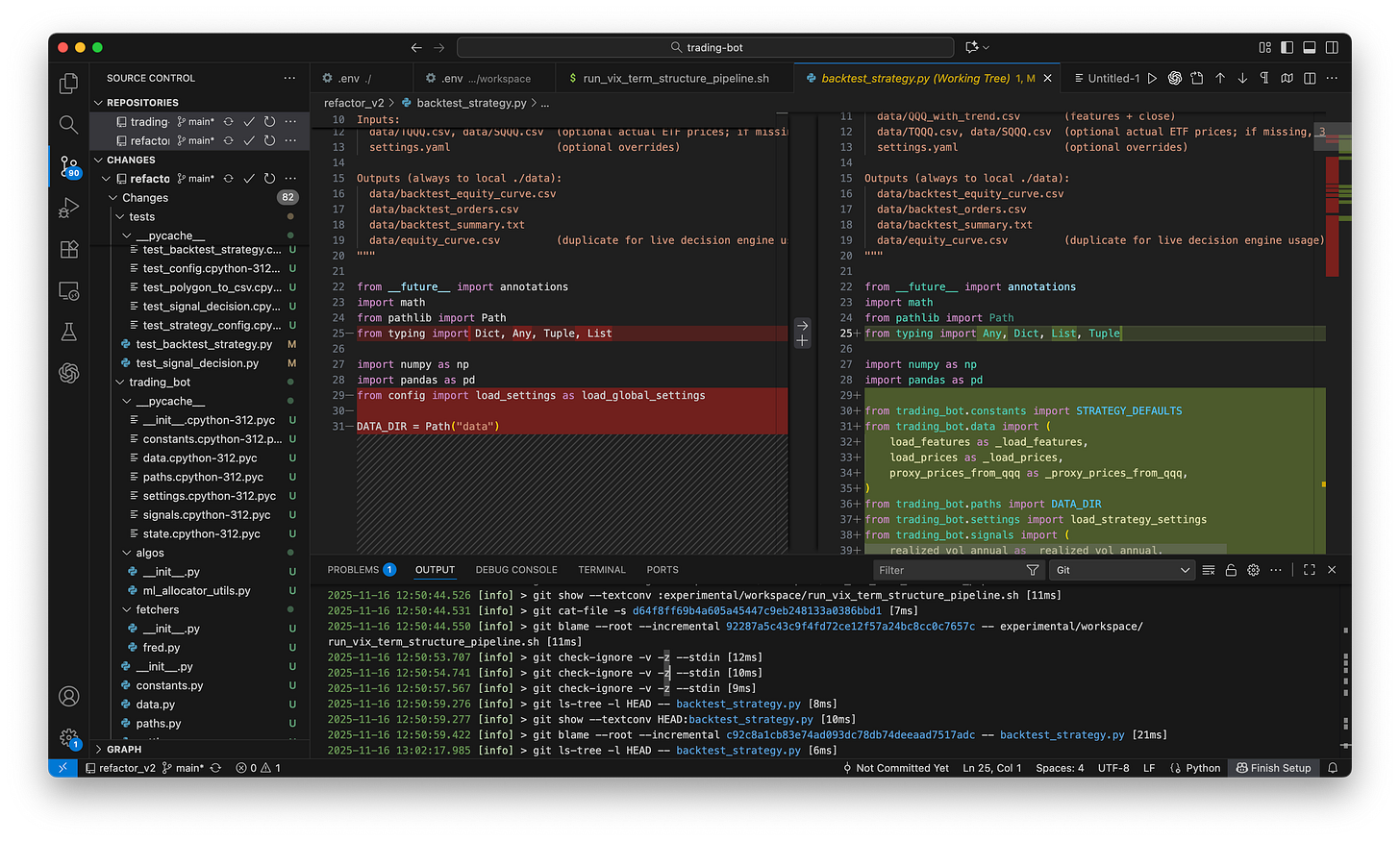

And finally -- version control, version control, version control. Within the first few hours of writing this bot, I overwrote my CSVs. I use git religiously and create backups and manage version control aggressively. I also iterate fast, and version control is a godsend in managing mistakes that’ll creep in (and trust me, they will).

Project layout (modules, not monolith)

trading-bot/
  config/
    settings.yaml            # symbols, providers, paths, schedule, risk toggles
    credentials.example.env  # template for secrets (never commit the real one)
  data/
    raw/                     # provider responses (optional cache)
    curated/                 # cleaned CSVs used by the pipeline
    backtests/               # point-in-time snapshots and results
  notebooks/                 # scratch EDA only (outputs are disposable)
  logs/
    runtime/                 # daily run logs
  src/
    __init__.py
    accounts/                # account connector(s)
    providers/               # polygon, alpaca, etc.
    datastore/               # csv i/o, schemas, validation
    features/                # trend metrics, transforms
    signals/                 # signal rules & weights
    backtest/                # runners, metrics, reports
    scheduler/               # daily run orchestration
    utils/                   # common helpers (time, tz, retry)
  tests/                     # quick unit tests for math & io
  README.md

Why this structure: Each piece has one job. I can run backtests without touching providers, and adjust signals without breaking I/O.

C++ Environment (Mac M2)

Compiler / toolchain:

clang via Xcode Command Line Tools (Apple Silicon native).

Standard: C++20 (good balance of features + library support).

Build & project layout:

CMake as the build system. Simple structure:

src/ for core bot logic (strategies, risk, schedulers)

include/ for headers

third_party/ for vendored libs (or FetchContent in CMake)

Out-of-source builds only (build/ directory) to keep the tree clean.

Core deps for a trading bot:

HTTP / WebSocket:

cpr or cpp-httplib for REST (Alpaca, Polygon, etc.).

websocketpp or uWebSockets if you want live feeds later.

JSON:

nlohmann/json for request/response parsing.

Time & scheduling:

date library (Howard Hinnant) for timezone/conversions if <chrono> isn’t enough.

Math / stats:

Eigen or xtensor for vector math and basic linear algebra.

Logging & utilities:

spdlog for logs.

fmt for fast, clean formatting (often bundled with spdlog).

Reproducibility / setup:

One CMakeLists.txt at the root that configures all targets and pulls in third-party libs via FetchContent or Git submodules.

A single bootstrap sequence on a new Mac:

xcode-select --install
brew install cmake
cmake -S . -B build && cmake --build build

Pin library versions via submodule tags or a specific GIT_TAG in FetchContent. Don’t track master for anything that touches execution, math, or APIs.

Notes for Apple Silicon:

Stay fully ARM-native: Homebrew under /opt/homebrew, no Rosetta unless you absolutely must.

Avoid mixing multiple compilers/package managers (no half-brew, half-MacPorts, half-conda zoo). A clean clang + CMake + brew toolchain on M2 is boring, and that’s exactly what you want for a live trading bot.

Configuration & secrets

settings.yaml holds non-secret config: symbols, lookbacks (50/100/250), trading window (e.g., last 10 minutes), file paths, and toggles (paper/live).

.env holds secrets (API keys). Load once at startup. I’m playing fast and loose for now since it’s a paper trading account, but I’ll eventually need to be more secure here.

Validation: pydantic (or similar) to fail fast if a path or key is missing.
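To make the fail-fast idea concrete, here is a minimal, dependency-free sketch that uses a plain dataclass as a stand-in for pydantic. The field names, the BROKER_API_KEY variable, and the load_settings helper are all hypothetical; the real settings.yaml may be shaped differently.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class Settings:
    # Hypothetical fields; the real settings.yaml may hold more or different keys.
    symbols: tuple[str, ...]
    lookbacks: tuple[int, ...]   # e.g. (50, 100, 250)
    curated_dir: Path
    mode: str                    # "paper" or "live"


def load_settings(raw: dict, env: dict) -> Settings:
    """Validate already-parsed YAML (raw) and environment (env); raise ValueError early."""
    required = ("symbols", "lookbacks", "curated_dir", "mode")
    missing = [key for key in required if key not in raw]
    if missing:
        raise ValueError(f"settings missing keys: {missing}")

    if raw["mode"] not in ("paper", "live"):
        raise ValueError(f"mode must be 'paper' or 'live', got {raw['mode']!r}")

    lookbacks = tuple(int(n) for n in raw["lookbacks"])
    if any(n <= 0 for n in lookbacks) or list(lookbacks) != sorted(lookbacks):
        raise ValueError("lookbacks must be positive and ascending")

    curated_dir = Path(raw["curated_dir"])
    if not curated_dir.is_dir():
        raise ValueError(f"curated_dir does not exist: {curated_dir}")

    # BROKER_API_KEY is an assumed variable name for the secret kept in .env.
    if not env.get("BROKER_API_KEY"):
        raise ValueError("BROKER_API_KEY is not set")

    return Settings(tuple(raw["symbols"]), lookbacks, curated_dir, raw["mode"])
```

At startup this would be called once, for example load_settings(parsed_yaml, os.environ), so a missing path or key stops the run before any data is fetched.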

Module responsibilities (high level)

accounts/

Connect to the broker (paper first).

Query balances, buying power.

(Later) route orders. For now: dry-run only.

providers/

fetch_daily_bars(symbol, start, end) from Polygon/Alpaca.

Rate-limit aware with retry/backoff.

Idempotent: same request → same file in data/raw/ (optional).
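The retry/backoff behaviour could look roughly like the sketch below. It is provider-agnostic: the fetch callable, the RetryableError class, and the delay values are illustrative assumptions, not part of any Polygon or Alpaca client.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RetryableError(Exception):
    """Hypothetical error a fetch function raises for transient failures (e.g. HTTP 429/5xx)."""


def with_backoff(
    fetch: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch(), retrying on RetryableError with exponentially growing delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except RetryableError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error to the caller
            sleep(base_delay * 2 ** (attempt - 1))  # base, 2x base, 4x base, ...
    raise AssertionError("unreachable")
```

Passing sleep as a parameter keeps the function testable without real waiting. A production version would probably add jitter and honour any Retry-After header the provider sends.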

datastore/

Schema: date, open, high, low, close, volume (index/ETF may lack volume).

Strict dtypes: date (UTC), prices float64, volume nullable Int64.

Read: from data/curated/.

Write: atomic (write temp → move), no partials.

Validate: monotonic dates, no duplicates, no gaps unless documented.
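A minimal sketch of the atomic write and the date validation, using only the standard library rather than pandas so it runs anywhere. The function names are hypothetical, and the gap check is left out because it needs a trading calendar.

```python
import csv
import os
import tempfile
from datetime import date
from pathlib import Path

COLUMNS = ["date", "open", "high", "low", "close", "volume"]


def validate_dates(rows: list[dict]) -> None:
    """Raise ValueError unless dates are strictly increasing (sorted, no duplicates)."""
    dates = [date.fromisoformat(row["date"]) for row in rows]
    for prev, cur in zip(dates, dates[1:]):
        if cur <= prev:
            raise ValueError(f"date {cur} is not after {prev}")


def write_csv_atomic(path: Path, rows: list[dict]) -> None:
    """Write to a temp file in the target directory, then rename it over the target."""
    validate_dates(rows)
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", newline="") as fh:
            writer = csv.DictWriter(fh, fieldnames=COLUMNS)
            writer.writeheader()
            writer.writerows(rows)  # a missing volume is written as an empty field
            fh.flush()
            os.fsync(fh.fileno())
        os.replace(tmp_name, path)  # atomic when both paths are on the same filesystem
    except BaseException:
        if os.path.exists(tmp_name):
            os.unlink(tmp_name)  # never leave a partial file behind
        raise
```

Because validation runs before anything touches disk, a bad batch leaves the previous curated file exactly as it was.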

features/

Compute MA50/MA100/MA250 on close.

Derive velocity = MA spreads (50–100, 100–250), normalized.

Derive acceleration = Δ(velocity) over a small window.

Output back to a features CSV (same date index).
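The feature definitions above can be sketched in plain Python as follows. Two things here are assumptions rather than the definitions used in this project: velocity is normalized by dividing the spread by the slower MA, and acceleration uses a 5-row difference. In the real pipeline this would more likely be a pandas rolling mean.

```python
import math


def sma(values: list[float], window: int) -> list[float]:
    """Simple moving average; NaN until a full window is available."""
    out = [math.nan] * len(values)
    running = 0.0
    for i, value in enumerate(values):
        running += value
        if i >= window:
            running -= values[i - window]  # drop the value that left the window
        if i >= window - 1:
            out[i] = running / window
    return out


def velocity(fast: list[float], slow: list[float]) -> list[float]:
    """Normalized MA spread, (fast - slow) / slow. The normalization is an assumption."""
    return [(f - s) / s for f, s in zip(fast, slow)]


def acceleration(vel: list[float], window: int = 5) -> list[float]:
    """Change in velocity over `window` rows; NaN for the first `window` rows."""
    return [
        math.nan if i < window else vel[i] - vel[i - window]
        for i in range(len(vel))
    ]
```

For example, vel_50_100 would be velocity(sma(close, 50), sma(close, 100)). NaNs from the warm-up period propagate through velocity and acceleration, so the first usable row is determined by the longest window. Note that the running-sum approach assumes the input closes contain no NaNs.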

signals/

Rule set converts features → state: LONG / NEUTRAL / SHORT.

Leverage tiering based on velocity strength + (separate) vol filter.

Hysteresis/thresholds to reduce flip-flop around zero.
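To illustrate the hysteresis idea, here is a small state machine with separate entry and exit thresholds. The threshold values (0.010 and 0.003) and the function names are made up for the example; leverage tiering and the vol filter are not shown.

```python
def next_state(prev: str, velocity: float, enter: float = 0.010, exit_: float = 0.003) -> str:
    """Return LONG / NEUTRAL / SHORT.

    Because enter > exit_, an open position survives small wiggles around zero:
    it takes a strong reading to enter and only a clearly weak one to leave.
    Threshold values are illustrative assumptions.
    """
    if prev == "LONG" and velocity > exit_:
        return "LONG"       # stay long while velocity holds above the exit band
    if prev == "SHORT" and velocity < -exit_:
        return "SHORT"      # stay short while velocity holds below the exit band
    if velocity >= enter:
        return "LONG"
    if velocity <= -enter:
        return "SHORT"
    return "NEUTRAL"        # also the result for NaN, since every comparison is False


def run_states(velocities: list[float], start: str = "NEUTRAL") -> list[str]:
    """Apply next_state row by row, carrying the previous state forward."""
    states, state = [], start
    for v in velocities:
        state = next_state(state, v)
        states.append(state)
    return states
```

With a single threshold at zero, a velocity series hovering around 0.000 would flip the state on almost every bar; the dead band between exit_ and enter is what cuts that turnover.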

backtest/

Loads curated data + features (point-in-time safe).

Applies signal states → position vector.

Computes ROI, CAGR, Sharpe, Max DD, turnover, exposure.

Writes results to data/backtests/ with a manifest (params + timestamp).
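A rough sketch of the core backtest arithmetic, assuming daily returns, 252 trading days per year, and a zero risk-free rate for Sharpe. The function names are hypothetical, and turnover and exposure are omitted for brevity.

```python
import math


def lag_positions(signal: list[float]) -> list[float]:
    """Point-in-time safety: the position held on day i comes from the signal on day i-1."""
    return [0.0] + list(signal[:-1])


def equity_curve(daily_returns: list[float], positions: list[float]) -> list[float]:
    """Compound position-weighted returns, starting from an equity of 1.0."""
    equity, curve = 1.0, []
    for r, p in zip(daily_returns, positions):
        equity *= 1.0 + r * p
        curve.append(equity)
    return curve


def cagr(curve: list[float], periods_per_year: int = 252) -> float:
    years = len(curve) / periods_per_year
    return curve[-1] ** (1.0 / years) - 1.0


def sharpe(strategy_returns: list[float], periods_per_year: int = 252) -> float:
    """Annualized Sharpe with a zero risk-free rate (an assumption).

    Needs at least two returns and non-zero variance.
    """
    n = len(strategy_returns)
    mean = sum(strategy_returns) / n
    var = sum((r - mean) ** 2 for r in strategy_returns) / (n - 1)
    return mean / math.sqrt(var) * math.sqrt(periods_per_year)


def max_drawdown(curve: list[float]) -> float:
    """Largest peak-to-trough decline, as a negative fraction (e.g. -0.25)."""
    peak, worst = 1.0, 0.0
    for equity in curve:
        peak = max(peak, equity)
        worst = min(worst, equity / peak - 1.0)
    return worst
```

The lag in lag_positions is the part that matters most: skipping it lets today's close decide today's position, which quietly inflates every metric downstream.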

scheduler/

Daily run checklist:

load config

fetch latest bars

update curated CSVs

recompute features

recompute signal

(paper) generate hypothetical orders

log + archive artifacts

Timezone: store data in UTC, display/log in America/New_York.

CSVs: simple, fast, debuggable

Curated price CSV (per symbol):

date,open,high,low,close,volume

2024-11-01, ..., ..., ..., ..., ...

...

Sorted by date, no missing days without an explicit reason.

One file per symbol; consistent naming (SYMBOL.daily.csv).

Features CSV (per symbol):

date,ma50,ma100,ma250,vel_50_100,vel_100_250,accel_50_100

...

Keep derived columns narrow and explicit; no mystery fields.

If I change a formula, I bump a features_version in the filename.

Backtest results (per run):

run_id,asof,params_hash,cagr,sharpe,max_dd,roi,notes

...

I include the params hash so I can reproduce any chart later.
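One common way to build such a hash is to serialize the parameters to canonical JSON and take a SHA-256 digest. This is a sketch under that assumption; the 12-character truncation and the example parameter names are arbitrary choices for illustration.

```python
import hashlib
import json


def params_hash(params: dict) -> str:
    """Stable short hash of a parameter dict, independent of key order."""
    # sort_keys plus fixed separators give one canonical string per parameter set
    canonical = json.dumps(params, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# Hypothetical parameter sets: same values, different key order
a = params_hash({"lookbacks": [50, 100, 250], "enter": 0.01})
b = params_hash({"enter": 0.01, "lookbacks": [50, 100, 250]})
```

Here a and b are equal, while changing any value (say, enter to 0.02) yields a different hash, so the params_hash column identifies exactly which settings produced a row.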

Data hygiene & integrity

Idempotent fetch: Re-running today’s job shouldn’t create duplicates. I sort by newest first and only update the latest. In a future version, I’d like to actually create a hash to verify as well.

Gaps: If a provider misses a bar, mark and carry forward; don’t invent data. I spent a lot of time trying to figure out why a particular trade had stopped 7 days earlier. It turned out that a gap in one relatively irrelevant piece of data had halted everything.

Clock discipline: Only compute today’s features after the official close timestamp; avoid using partial intraday bars in a daily system.

Audit trail: Every daily run writes a small JSON manifest (what symbols, what time, which files changed).
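The idempotent-fetch and gap rules can be sketched as below. This version keys rows by date, which is one possible approach and not necessarily the sort-and-update-latest logic described above. The expected_dates list is assumed to come from a trading calendar that is not shown.

```python
def upsert_bars(existing: list[dict], incoming: list[dict]) -> list[dict]:
    """Merge new bars into existing ones by date; re-running with the same input is a no-op."""
    by_date = {row["date"]: row for row in existing}
    for row in incoming:
        by_date[row["date"]] = row  # a re-fetched bar replaces the stored one
    # ISO dates (YYYY-MM-DD) sort correctly as plain strings
    return [by_date[d] for d in sorted(by_date)]


def find_gaps(rows: list[dict], expected_dates: list[str]) -> list[str]:
    """Return expected trading dates with no bar, so they can be marked rather than invented."""
    have = {row["date"] for row in rows}
    return [d for d in expected_dates if d not in have]
```

Running find_gaps for every input series at the start of the daily job, and logging the result loudly, is one way to avoid the silent week-long stall described above.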

Logging & observability

Two logs:

logs/runtime/YYYY-MM-DD.log (human-readable)

logs/runtime/YYYY-MM-DD.ndjson (machine-parseable)

Log key events: fetch window, rows added, feature ranges, signal changes, and any non-200 provider responses (with retry counts).
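A minimal sketch of the two-log setup using the standard library logging module as a stand-in for loguru. The NdjsonFormatter class, the build_logger helper, and the "fields" convention for structured data are all hypothetical.

```python
import json
import logging
from pathlib import Path


class NdjsonFormatter(logging.Formatter):
    """Render each record as one JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "ts": self.formatTime(record),
            "level": record.levelname,
            "event": record.getMessage(),
        }
        # Structured fields arrive via logger.info(..., extra={"fields": {...}})
        payload.update(getattr(record, "fields", {}))
        return json.dumps(payload)


def build_logger(log_dir: Path, day: str) -> logging.Logger:
    """Create a logger that writes <day>.log and <day>.ndjson under log_dir."""
    log_dir.mkdir(parents=True, exist_ok=True)
    logger = logging.getLogger(f"run.{day}")
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.handlers.clear()  # avoid duplicate lines if called twice in one process

    human = logging.FileHandler(log_dir / f"{day}.log")
    human.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
    machine = logging.FileHandler(log_dir / f"{day}.ndjson")
    machine.setFormatter(NdjsonFormatter())

    logger.addHandler(human)
    logger.addHandler(machine)
    return logger
```

A call such as logger.info("rows added", extra={"fields": {"symbol": "SPY", "rows": 1}}) then produces a readable line in the .log file and a parseable object with symbol and rows keys in the .ndjson file.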

Testing (just enough)

Unit tests for:

MA math (edge cases: short windows, NaNs).

Velocity/acceleration correctness on small synthetic series.

CSV read/write round-trip (types and ordering).

A tiny “canary” backtest (e.g., 90 days) runs fast to catch breakage.
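As an example of what the MA edge-case tests might look like, here is a short unittest sketch. The sma function is a hypothetical stand-in for whatever lives in features/; the point is the shape of the tests, not the implementation.

```python
import math
import unittest


def sma(values, window):
    """Hypothetical function under test: NaN until the window is full."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / window if len(chunk) == window else math.nan)
    return out


class SmaTests(unittest.TestCase):
    def test_series_shorter_than_window_is_all_nan(self):
        self.assertTrue(all(math.isnan(x) for x in sma([1.0, 2.0], 3)))

    def test_known_values(self):
        self.assertEqual(sma([1.0, 2.0, 3.0, 4.0], 2)[1:], [1.5, 2.5, 3.5])

    def test_nan_input_only_affects_windows_containing_it(self):
        out = sma([1.0, math.nan, 3.0, 4.0], 2)
        self.assertTrue(math.isnan(out[1]) and math.isnan(out[2]))
        self.assertEqual(out[3], 3.5)
```

Saved under tests/, these run with python -m unittest in well under a second, which is what makes them cheap enough to run on every change.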

Daily workflow (what runs, in what order)

Fetch new daily bars (yesterday if running pre-open; same-day after close).

Curate: append, de-dup, validate.

Features: recompute last N rows (don’t recompute the world).

Signals: update trend / state + leverage tier.

(Paper) Orders: generate hypothetical trades and store.

Archive: write manifests, plots, and summaries to data/backtests/ (optional daily micro-backtest for sanity).

Notify: short text summary from logs (signal state changes, risk flags).

What I’m not doing (yet)

No live order routing. Paper only.

No ML weight auto-tuning in production. Manual thresholds first, then iterate.

No database. CSVs are enough at this scale and make debugging trivial.

The information presented in Math & Markets is not investment or financial advice and should not be construed as such.